Cutting GSTR-3B filing time by 87%

India's GST regime requires 1.4 crore businesses to file GSTR-3B returns every month. Finance teams at scale were losing five days a month to a process built for single filings. I led the redesign — and discovered, three months post-launch, that our success metrics were lying to us.

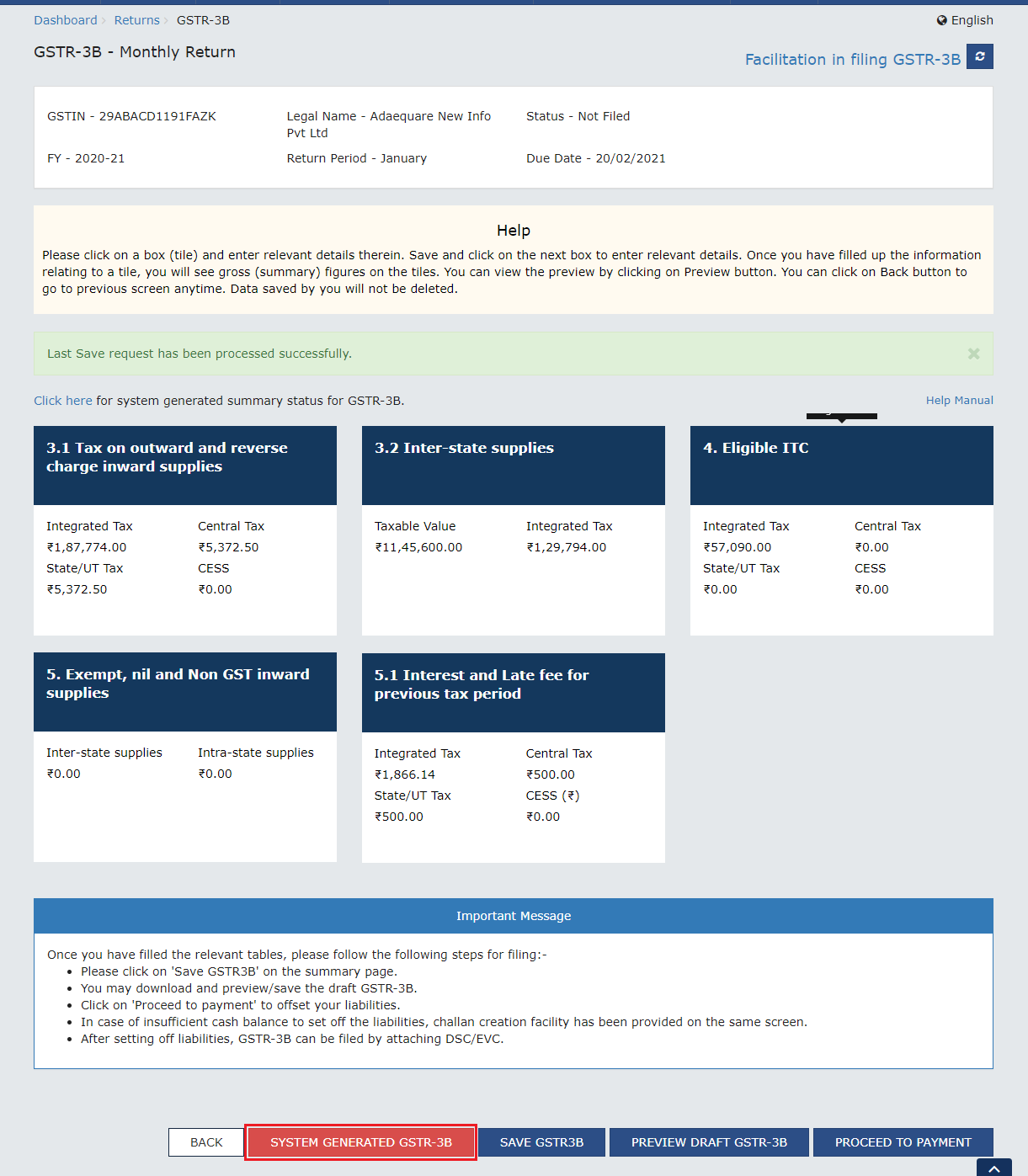

The platform was anti-scale

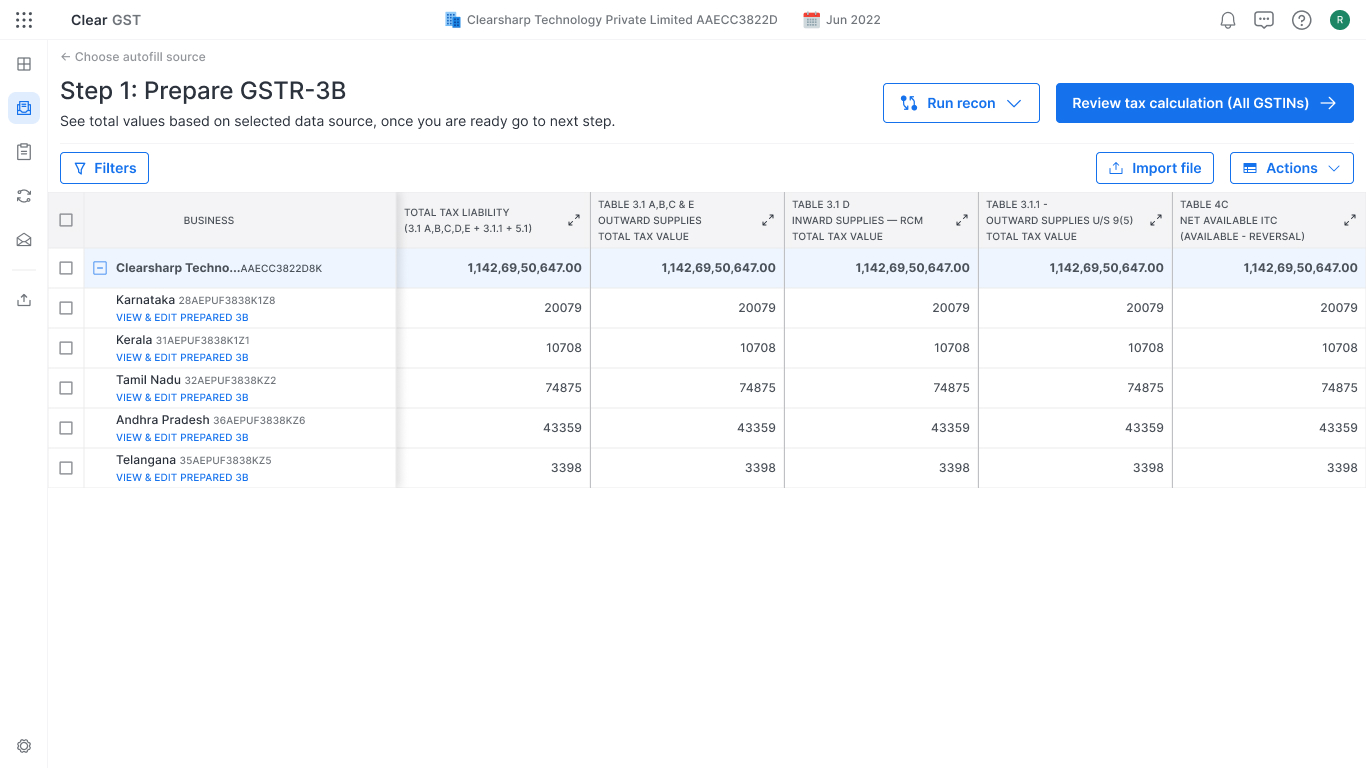

Clear’s GST product worked beautifully for a single business filing a single return. The problem was that real customers had dozens. In India, a company operating across multiple states needs a separate GST registration (GSTIN) per state — and the government portal, plus Clear’s existing flow, forced finance teams to file one registration at a time. A monthly compliance task turned into a five-day ordeal for any company with more than ten branches.

“My team of six spends five full days every month filing for 120 registrations. We make mistakes because the process creates mistakes.” — Sunita, CFO · Manufacturing Firm

Three patterns kept showing up across 50+ interviews

We ran 50+ user interviews across MSMEs, enterprises, and tax consultants, analysed 500+ support tickets, and ran usability tests on the existing platform. The pain didn’t fragment — it concentrated around scale, trust, and tooling.

“I download the recon to Excel, create pivot tables, and cross-check. Your platform’s logic is a black box.” — Meera, Tax Consultant · 30+ clients

Of enterprises with 10+ registrations (GSTINs) cited multi-filing as their #1 pain point. The platform was built for one entity at a time — there was no concept of a batch.

65% of all support tickets were ITC reconciliation mismatches. The matching logic was opaque: users couldn't see why a row failed, only that it had.

Of users were copying data from Excel into the portal. Filtering, grouping, and bulk actions didn't exist in product, so finance teams escaped to spreadsheets.

We rebuilt the platform around batches, not single returns

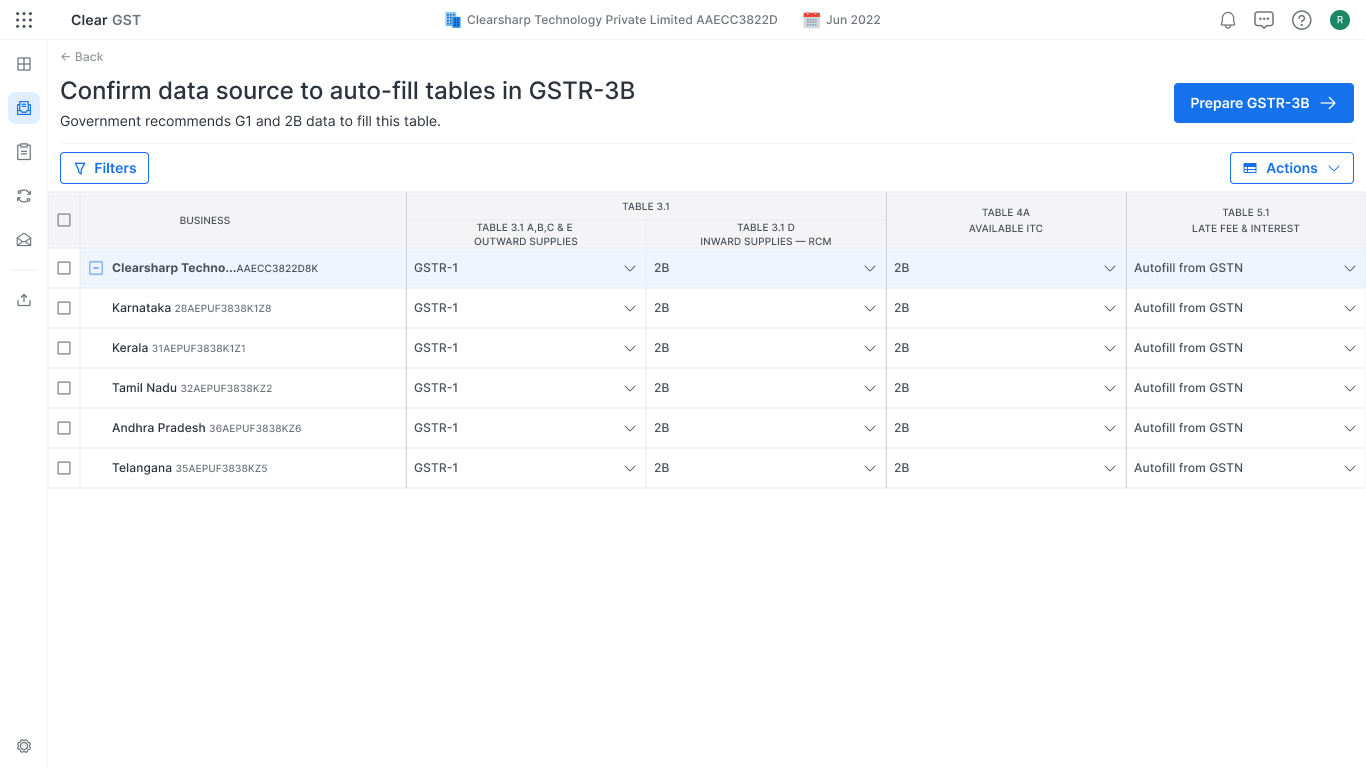

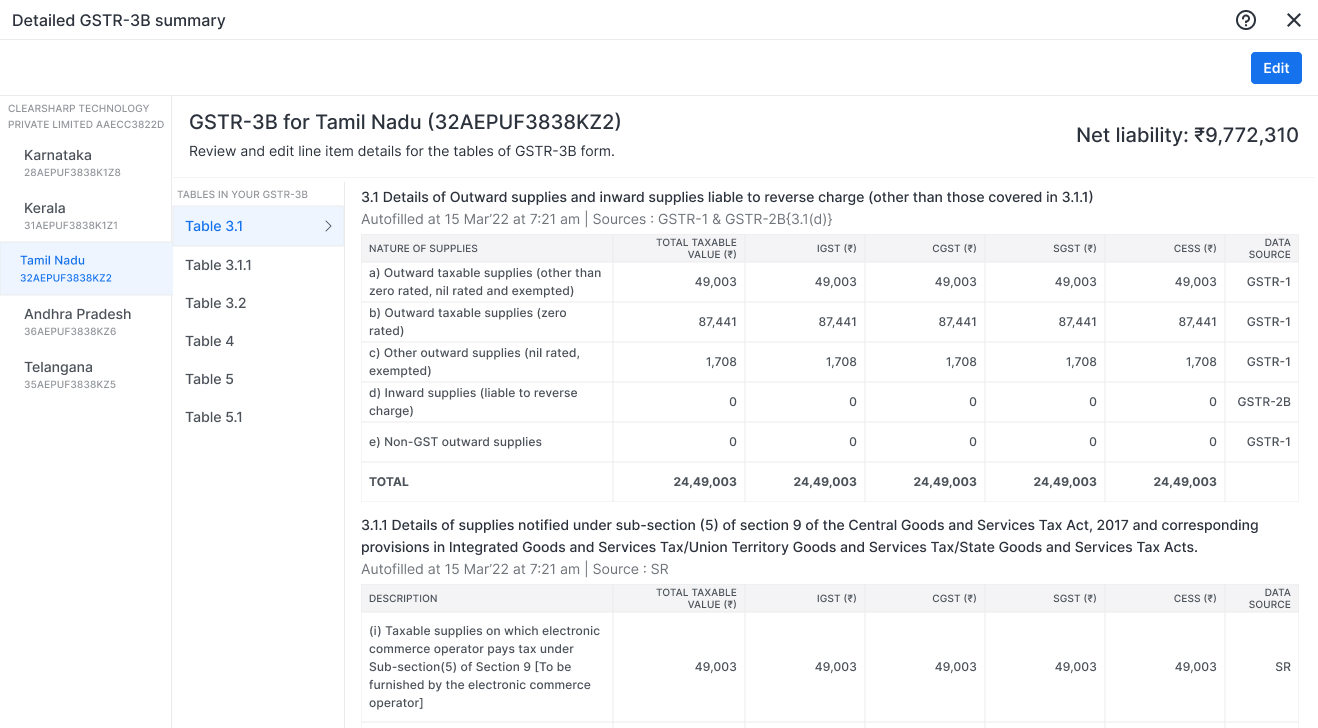

The redesign hinged on three structural shifts: bring scale to the dashboard, move reconciliation out of Excel and into the product, and let data sources be configured once and reused across all registrations. The IA was anchored around six steps from landing through filing, but the visible craft sat in two screens — the multi-registration dashboard and the reconciliation engine.

The dashboard collapsed what used to be a 5-day, registration-by-registration workflow into a single view. Status across every registration, batch actions, drill-down to invoice level when needed.

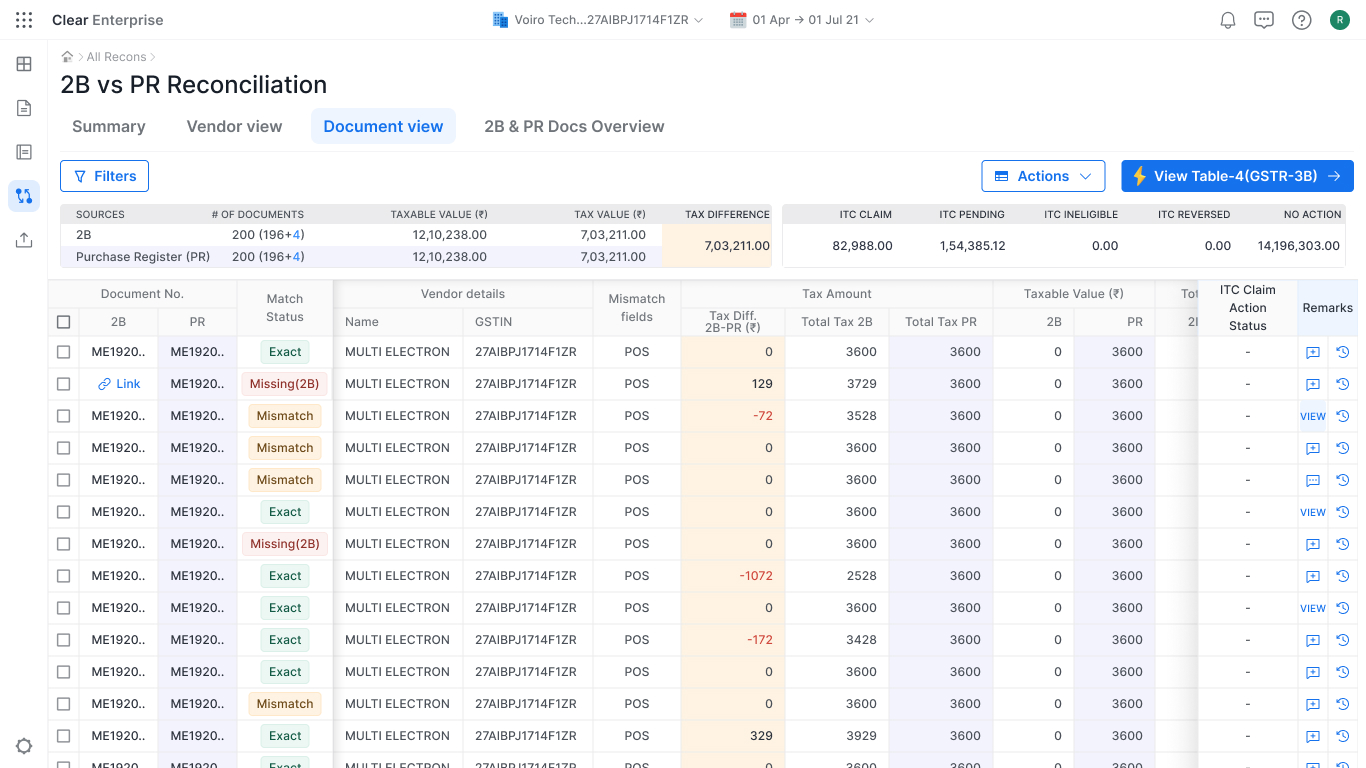

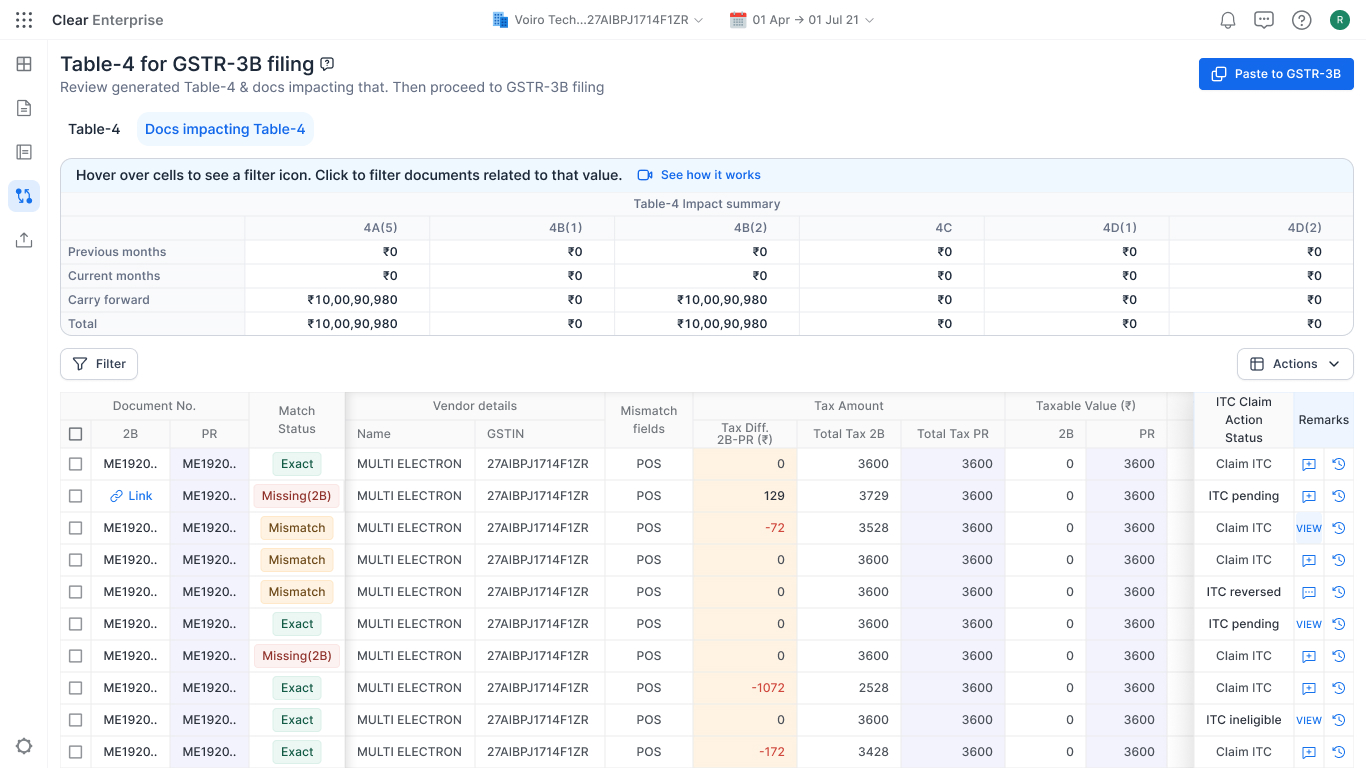

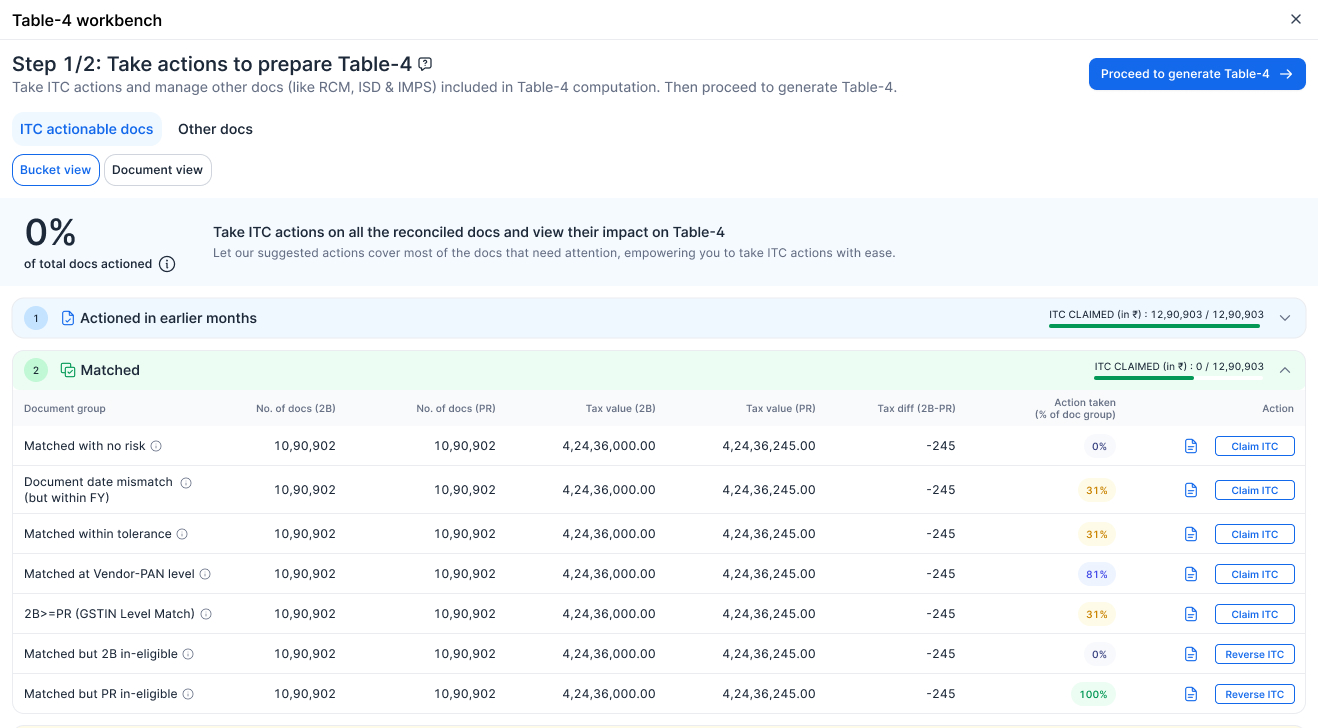

Reconciliation moved out of Excel and into the product

ITC reconciliation generated most of the support load — 65% of all tickets. Users were rebuilding pivot tables in Excel because the platform couldn’t compare GSTR-2B against their Purchase Register at scale. We built a split-view recon engine with vendor grouping, partial-invoice matching, and bulk approve/reject for 100+ invoices at a time.
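To make the comparison concrete, here is a minimal sketch of matching GSTR-2B rows against a Purchase Register and grouping the results by vendor. It is not Clear's matching logic: the `Invoice` fields, the status names, the placeholder vendor IDs, and the `tolerance` threshold are all assumptions for illustration. The point is that every row carries a reason, so a mismatch is never a bare failure.

```python
from collections import defaultdict
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Invoice:
    vendor_gstin: str      # placeholder IDs below, not real GSTINs
    invoice_no: str
    tax_amount: Decimal


def reconcile(gstr_2b, purchase_register, tolerance=Decimal("1.00")):
    """Group reconciliation results by vendor, with a reason for every row.

    Hypothetical rule: invoices match on (vendor, invoice number) and are
    accepted when the tax amounts differ by no more than `tolerance`.
    """
    books = {(inv.vendor_gstin, inv.invoice_no): inv for inv in purchase_register}
    by_vendor = defaultdict(list)

    for inv in gstr_2b:
        match = books.pop((inv.vendor_gstin, inv.invoice_no), None)
        if match is None:
            status, reason = "missing_in_books", "not found in Purchase Register"
        else:
            diff = abs(inv.tax_amount - match.tax_amount)
            status = "matched" if diff <= tolerance else "mismatch"
            reason = f"tax differs by {diff}"
        by_vendor[inv.vendor_gstin].append((inv.invoice_no, status, reason))

    # Anything still in the books never appeared in GSTR-2B.
    for vendor, invoice_no in books:
        by_vendor[vendor].append((invoice_no, "missing_in_2b", "not found in GSTR-2B"))

    return dict(by_vendor)


two_b = [
    Invoice("VENDOR-A", "INV-1", Decimal("180.00")),
    Invoice("VENDOR-A", "INV-2", Decimal("90.00")),
    Invoice("VENDOR-B", "INV-9", Decimal("50.00")),
]
register = [
    Invoice("VENDOR-A", "INV-1", Decimal("180.50")),
    Invoice("VENDOR-A", "INV-2", Decimal("120.00")),
    Invoice("VENDOR-C", "INV-3", Decimal("10.00")),
]
result = reconcile(two_b, register)
```

Grouping by vendor is what makes bulk approve/reject workable: a reviewer can clear every `matched` row for a vendor at once and spend time only on rows whose reason needs a decision.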

We launched. The numbers came back fast.

Clear GST 2.0 shipped in early 2023. Adoption jumped from 18% to 42%. Filing time per registration dropped from an hour to under ten minutes. Annual revenue grew 67%. The team celebrated.

I wasn’t comfortable.

| Metric | Before | After |
|---|---|---|
| GSTR-3B Adoption · launch month | 18% | 42% |
| Filing Time per Registration | 1 hr | < 10 min |
| Annual Revenue · Clear GST | | +67% |
| CSM Tickets / Month | ~400 | ~350 |

Adoption ≠ Proficiency

Three months in, I went back into the field. Not surveys, not analytics — actual shadowing. I watched customers file. I asked one question: “How would you solve this without the platform?”

The answers broke the launch narrative open. CSMs, under pressure to hit adoption targets, were doing tasks for users to mark them as “active.” People were filing — but they weren’t getting better at the platform. They were getting handheld through the same blockers every month, and reverting to Excel the moment the CSM left the call. The dashboards weren’t lying. They just weren’t measuring the right thing.

- 100% Started filing
- 53% Completed Data Source step
- 11% Completed Table 4 in-platform: the drop, where the platform stopped being trusted
- 89% Opened Excel mid-flow: where users actually went

Three-month post-launch funnel — adoption looked healthy on dashboards, but only 11% finished in-platform; 89% reverted to Excel mid-flow.
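The funnel arithmetic is simple enough to sketch. The step names and percentages come from the funnel above; the `step_drop_offs` helper is a hypothetical name used only for illustration.

```python
funnel = [
    ("Started filing", 100),
    ("Completed Data Source step", 53),
    ("Completed Table 4 in-platform", 11),
]


def step_drop_offs(steps):
    """Return (step name, percentage points lost since the previous step)."""
    return [
        (name, prev_pct - pct)
        for (_, prev_pct), (name, pct) in zip(steps, steps[1:])
    ]


drops = step_drop_offs(funnel)

# Share of users who started but did not finish in-platform.
reverted = funnel[0][1] - funnel[-1][1]
```

Here `drops` shows 47 points lost at the Data Source step and a further 42 before Table 4 was completed, and `reverted` is the 89% who left for Excel.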

The platform was being used. It wasn’t being trusted.

The first fix was contextual, not feature-driven

The standalone Data Source setup page felt like a gatekeeper, not a workflow enabler. We embedded the controls directly into the GSTR-3B form, adjustable while reviewing Table-4. Same controls, different context. Drop-off went from 47% to near-zero.

“Finally, I can adjust sources right where I need them.” — Deepak, MSME Owner

The black box became glass

Reconciliation decisions used to feel like inputs into a void. Users couldn’t see how a single accept or reject changed their ITC computation, so they didn’t trust the auto-fills and rebuilt everything in Excel. We added a live Table-4 preview that updated in real time as users worked through the recon — every decision, immediately visible in the cell it affected.
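A minimal sketch of the idea behind the live preview, assuming a heavily simplified model: Table 4 is collapsed into a single eligible-ITC total, and the `Table4Preview` class and its method names are hypothetical. Every decision returns the new total straight away, so the user sees its effect in the same interaction.

```python
from decimal import Decimal


class Table4Preview:
    """Keeps an eligible-ITC total in step with recon decisions (simplified)."""

    def __init__(self, invoice_itc):
        self._itc = dict(invoice_itc)  # invoice number -> ITC amount
        self._decisions = {}           # invoice number -> "accept" | "reject"

    def decide(self, invoice_no, decision):
        """Record a decision and return the updated total."""
        if invoice_no not in self._itc:
            raise KeyError(invoice_no)
        if decision not in ("accept", "reject"):
            raise ValueError(decision)
        self._decisions[invoice_no] = decision
        return self.eligible_itc()

    def eligible_itc(self):
        """Sum ITC over accepted invoices only."""
        return sum(
            (self._itc[no] for no, d in self._decisions.items() if d == "accept"),
            Decimal("0"),
        )


preview = Table4Preview({"INV-1": Decimal("180.00"), "INV-2": Decimal("90.00")})
after_first = preview.decide("INV-1", "accept")
after_second = preview.decide("INV-2", "accept")
after_reversal = preview.decide("INV-1", "reject")
```

Recomputing from the full decision set on every change keeps the preview correct when a user reverses an earlier call, which is the case that erodes trust when totals drift.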

“The live previews made Table-4 feel less like a mystery.” — Meera, Tax Consultant

Excel finally became optional

We added native filtering, grouping, sub-grouping, and bulk actions on the recon table. The pivot table workflow finally lived inside the product. Filing time per registration batch dropped from 30 minutes to 2 minutes, and the Excel revert rate fell off the funnel entirely.

Results & Impact

Three phases. The third one is the one that counts.

| Metric | Clear GST 1.0 | 2.0 Launch | 2.0 Post-Iteration |
|---|---|---|---|
| GSTR-3B adoption | 18% | 42% | 53% |
| Filing time per registration | 1 hour | < 10 min | < 8 min |
| Registrations (GSTINs) per customer | 4–5 | 12–15 | 12–15 |
| Reconciliation accuracy | 80% | 80–92% | 94% |
| CSM tickets / month | ~400 | ~350 | ~110 |

The launch column looks good. The post-iteration column is what made the product real.
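For reference, the 87% in the title is consistent with the filing-time row, assuming it compares the 1-hour baseline with the 8-minute post-iteration upper bound. That pairing is my reading of the table, not something stated elsewhere, and `percent_reduction` is an illustrative helper.

```python
def percent_reduction(before_minutes, after_minutes):
    """Percentage drop from before to after, rounded to a whole percent."""
    return round((before_minutes - after_minutes) / before_minutes * 100)


at_launch = percent_reduction(60, 10)       # 1 hour -> 10 min upper bound
post_iteration = percent_reduction(60, 8)   # 1 hour -> 8 min upper bound
```

Because both "after" values are upper bounds, the real reductions are at least these figures: 83% at launch and 87% after iteration.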

Key Learning

Adoption ≠ Proficiency. Logins, click-through, and CSM check-ins all said the launch was working. Three months in, I sat with users and watched them paste batches back into Excel the moment a CSM dropped off the call. The dashboards weren’t lying — they were measuring the wrong thing. The fixes that made GSTR-3B real came from an hour of shadowing, not from the launch numbers.